A score of 100 from automated testing feels good. Your dashboard turns green. The report says you passed every check. On paper, everything looks compliant. It is the kind of result that gets shared in Slack, checked off in a ticket, and filed away as “resolved.”

But that score does not mean people can use your site.

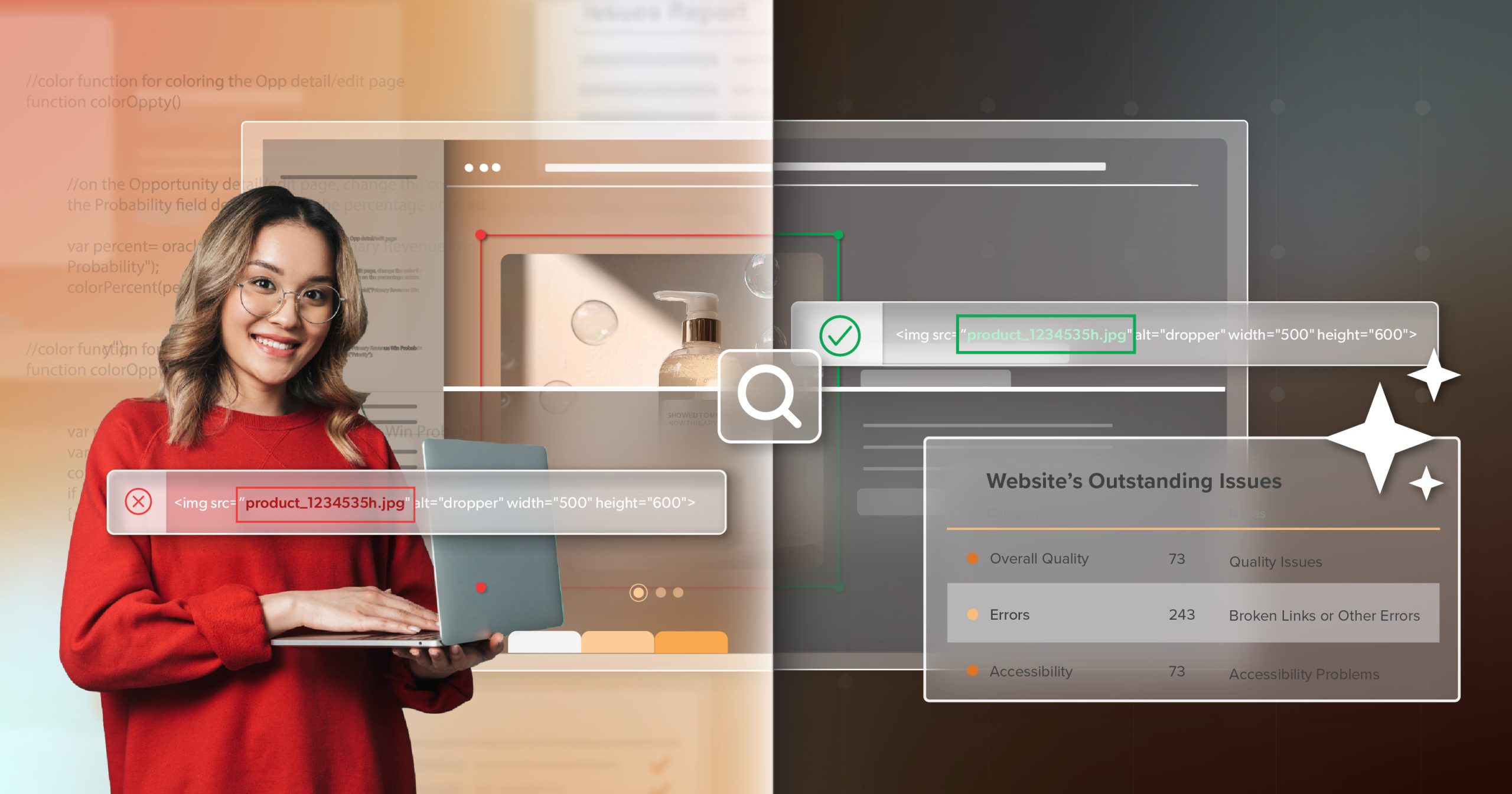

Most automated testing tools are helpful. They catch real barriers and save time. The problem is what they cannot measure. In practice, automated checks tend to cover only a slice of accessibility—roughly 30 percent—because they are limited to what can be evaluated programmatically. The remaining work involves interaction, context, and human judgment. As standards evolve and legal expectations keep tightening, you have to be honest about whether the metrics you rely on still tell the truth—for your business and for your users.

Here is where automated testing leaves gaps that can turn into barriers for users and real exposure for your team.

What Google Lighthouse Checks (and What It Doesn’t)

Google Lighthouse is an open-source tool that audits a web page and reports on several quality signals—most commonly performance, SEO, and accessibility. It is widely used because it is easy to run, easy to share, and it produces a single score that feels objective.

As an accessibility tool, though, Lighthouse is limited.

How Lighthouse Calculates Your Accessibility Score

Like all automated accessibility tests, Lighthouse can miss barriers that affect users (false negatives). It can also flag patterns that are not actually barriers in context (false positives). That is not a knock on Lighthouse. It is a reminder that the tool is only as reliable as what can be measured from code alone.

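When Google Lighthouse scores accessibility, it runs a set of pass-or-fail checks and assigns a weight to each one. Your final score is a weighted average, which means some failures carry much more impact than others. There is no partial credit within a check: if some elements on the page pass and others fail, the whole check fails.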

A clear example is severe ARIA misuse. Putting aria-hidden="true" on the body element is heavily weighted because it removes page content from the accessibility tree. When that happens, a screen reader user may not be able to perceive the page at all. Lighthouse penalizes this hard, and it should.
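The weighted pass-or-fail model can be sketched in a few lines. This is a simplified illustration, not Lighthouse's implementation: the audit names and weights below are assumptions chosen to show how one heavily weighted failure moves the score more than a lightly weighted one.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Audit:
    name: str     # hypothetical audit identifier
    weight: int   # illustrative weight, not a real Lighthouse value
    passed: bool  # each audit is strictly pass or fail


def weighted_score(audits: Sequence[Audit]) -> int:
    """Return a 0-100 score: weight of passed audits over total weight."""
    total = sum(a.weight for a in audits)
    if total == 0:
        return 100  # assumption for this sketch: nothing applicable to check
    earned = sum(a.weight for a in audits if a.passed)
    return round(100 * earned / total)


# One heavily weighted failure (hiding the whole page from assistive tech).
heavy_failure = [
    Audit("aria-hidden-on-body", weight=10, passed=False),
    Audit("image-alt", weight=10, passed=True),
    Audit("html-has-lang", weight=7, passed=True),
    Audit("heading-order", weight=3, passed=True),
]

# Same audits, but the only failure is a lightly weighted one.
light_failure = [
    Audit("aria-hidden-on-body", weight=10, passed=True),
    Audit("image-alt", weight=10, passed=True),
    Audit("html-has-lang", weight=7, passed=True),
    Audit("heading-order", weight=3, passed=False),
]

print(weighted_score(heavy_failure), weighted_score(light_failure))
```

With these made-up weights, a single failure costs either a third of the score or a tenth of it, depending only on which check failed. Neither number says anything about whether a person could complete a task on the page.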

Where Lighthouse Scores Stop and User Experience Starts

Notice what that scoring model reinforces. Lighthouse is evaluating machine-detectable code patterns. It is not validating the full user experience—whether a flow makes sense, whether focus order matches intent, whether labels hold up in context, or whether an interaction is usable with assistive technology.

Google’s own guidance is clear: only a subset of accessibility issues can be detected automatically, and manual testing is encouraged. That is not a minor disclaimer. It defines the boundary of what the score means.

If you use the score as a proxy for accessibility, you are using it outside its intended purpose.

How Automated Accessibility Testing Evaluates Your Site

Automated testing is built for consistency and repeatability. It excels at spotting structural issues that follow well-defined rules. In practice, that usually means it flags things like:

- Missing alt attributes on images

- Low color contrast ratios based on numeric values

- Form fields with no programmatic label

- Empty buttons or links with no text alternative

- Missing language attributes on the html element

- Obvious ARIA errors that break the accessibility tree
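A minimal sketch of how rule-based checks like these operate, using only Python's standard html.parser module. The class name, the sample markup, and the three rules are assumptions chosen for illustration; real testing engines cover far more rules and edge cases.

```python
from html.parser import HTMLParser


class PresenceChecker(HTMLParser):
    """Flags a few structural issues that are detectable from markup alone."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self._in_button = False
        self._button_has_name = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "html" and not attributes.get("lang"):
            self.issues.append("html element has no lang attribute")
        elif tag == "img" and "alt" not in attributes:
            self.issues.append("img is missing an alt attribute")
        elif tag == "button":
            # Start tracking whether this button ends up with any name.
            self._in_button = True
            self._button_has_name = bool(attributes.get("aria-label"))

    def handle_data(self, data):
        # Any non-whitespace text inside a button counts as its name.
        if self._in_button and data.strip():
            self._button_has_name = True

    def handle_endtag(self, tag):
        if tag == "button" and self._in_button:
            if not self._button_has_name:
                self.issues.append("button has no text alternative")
            self._in_button = False


# Hypothetical page fragment used only for this example.
SAMPLE = """
<html>
  <body>
    <img src="hero.png">
    <img src="shoe.png" alt="image123">
    <button></button>
    <button>Add to cart</button>
  </body>
</html>
"""

checker = PresenceChecker()
checker.feed(SAMPLE)
checker.close()
for issue in checker.issues:
    print(issue)
```

Note that the second image, with alt="image123", produces no issue. The attribute is present, so the rule passes, even though the text tells a user nothing about the product.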

Why “Pass” Does Not Mean “Helpful”

Color contrast is a clear example. A tool can measure foreground and background values, calculate the ratio, and report whether it meets the Web Content Accessibility Guidelines (WCAG) requirements. For example, SC 1.4.3 Contrast (Minimum) requires a ratio of at least 4.5:1 for normal-size text. That matters for users with low vision and color vision differences.
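The measurable part of that check is plain arithmetic. The sketch below follows the relative luminance and contrast ratio definitions in WCAG 2.x; the function names and the sample colors are assumptions for illustration, and the check covers only the 4.5:1 threshold for normal-size text.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    value = hex_color.lstrip("#")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, always lighter over darker."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_normal_text(foreground: str, background: str) -> bool:
    """True when the pair meets the 4.5:1 minimum for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


# Two nearly identical grays on white land on opposite sides of the threshold.
for gray in ("#767676", "#777777"):
    ratio = contrast_ratio(gray, "#ffffff")
    print(gray, round(ratio, 2), passes_normal_text(gray, "#ffffff"))
```

The function returns a number and a pass or fail. It knows nothing about the font, the weight, the size on screen, or what surrounds the text.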

The measurement is also where the tool stops. Automated tools can calculate contrast ratios, but they cannot evaluate readability in context. They cannot tell whether your font size and weight work well with that contrast choice, whether visual styling creates confusing groupings in navigation, or whether users can scan the page and understand it easily.

That pattern shows up across most automated checks. Tools confirm that something is present in code; they do not confirm how well it works in context. The scan focuses on individual elements rather than the interactions between them, on static states rather than the workflows people have to move through.

That coverage is useful, but it is thin. Everything else sits in the gap that automation cannot reach.

The Limits of Automated Accessibility Testing

The issues that stop people usually sit outside what automation can prove. They show up in behavior and context, not in markup alone. That is how a site can “pass” and still fail users.

Keyboard Navigation and Focus Visibility

A tool can confirm that an element is focusable and that a label exists. It cannot verify what using the page with a keyboard actually feels like.

You still need to confirm that:

- All interactive elements can be reached by pressing Tab.

- Focus indicators stay visible and easy to follow.

- Complex widgets like date pickers, autocomplete fields, and modal dialogs work correctly with keyboard-only navigation.

Those answers do not come from scanning markup. Keyboard testing requires human interaction and someone who understands how keyboard users move through web pages.

Screen Reader Output and Meaning

Automation can confirm that text alternatives and labels are present. It cannot confirm what a screen reader announces, in what order, and whether that output is useful in context.

This is where “passes” hide confusion. A tool cannot tell whether the alt text for a product photo says “image123,” “Yum yum,” or something that actually describes the product. All three satisfy the requirement. Only a real description helps a user.

A label can exist but be announced in a way that does not match the visible interface. Alt text can be technically present and still add noise instead of clarity. Errors can appear visually and never be announced at all. The code can look correct while the experience still breaks.

Screen readers also differ. NVDA, JAWS, VoiceOver on macOS, VoiceOver on iOS, and TalkBack all interpret markup in slightly different ways. Automated testing does not account for those differences. It assumes a static model of accessibility, while users operate in dynamic environments.

Understanding, Language, and Cognitive Load

Tools do not measure whether your interface is understandable. They do not know when instructions are dense. They do not notice when terminology shifts from one step to the next or when navigation labels do not match what the page is actually doing.

Key questions stay unanswered:

- When someone scans the page, can they tell what to do next, or is it buried in jargon and extra complexity?

- If they make a mistake, do they have a clear way to recover, or are they forced to start over?

- As users change text size or zoom, does the layout hold together, or does it fall apart?

- For people with cognitive disabilities, do your interface patterns feel consistent and understandable?

Why Manual Accessibility Testing Still Sets the Standard

Automated checks can tell you whether patterns exist in your code. They cannot tell you whether those patterns work when a person tries to complete a task with assistive technology.

Manual testing is where you find the failures that stay invisible in a report. It is also where you verify that “accessible” holds up across the tools people actually use.

In audits, we test with NVDA and JAWS on Windows, VoiceOver on macOS, VoiceOver on iOS, and TalkBack on Android. These tools do not behave the same way, even when the markup looks clean. We also test keyboard-only navigation, voice control, and zoom. Each component is evaluated against a checklist of over 260 items for full WCAG 2.2 coverage.

This is often where perfect automated scores stop feeling meaningful. Forms can look correct on paper, yet labels that technically announce still fail voice control because the spoken target is unclear. Mobile layouts may meet target size rules, while the placement makes taps unreliable. Dynamic regions can update with no announcement at all, so screen reader users lose the thread. Navigation might be valid in markup and still be hard to use when landmarks are noisy, vague, or missing where people expect them.

Manual testing connects those details back to the actual job a user is trying to do.

The Cost of Relying Only on Automated Accessibility Tests

Teams that stop at automated testing tend to learn about the remaining issues the hard way. A user hits a blocker, reports it, and now the problem is public. That carries reputational risk, can become legal risk, and often lands on your team as an urgent disruption instead of planned work.

It is also avoidable.

The cost curve is clear. A full audit that includes manual testing is typically cheaper than defending a claim, rebuilding components that shipped without assistive technology constraints in mind, or patching accessibility after customers have already churned. Teams sometimes rebuild the same feature more than once because the first pass did not account for how screen readers announce changes or how voice control targets labels.

Automated testing is a starting point. A perfect score is baseline hygiene worth maintaining. It is necessary, and still nowhere near enough.

Combining Automated and Manual Accessibility Testing

A perfect Lighthouse score or a clean automated report can create false confidence. Genuine accessibility depends on both automated and manual testing. Automated checks belong in your everyday development pipeline, catching structural issues early and guarding against regressions. Manual testing with assistive technology then fills in the rest of the picture, showing whether people can actually complete tasks.

A better approach is to treat automation as the first pass and manual testing as the standard of proof. Run automated tests early and often, then make space for keyboard checks, screen reader passes, and voice control scenarios before you sign off on a release.
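One way to wire that into a pipeline is a small gate that reads the accessibility category score from a Lighthouse JSON report (a value from 0 to 1) and treats a pass as permission to continue, not as sign-off. This is a sketch under assumptions: the function names, the threshold, and the inline sample report are hypothetical, and a real pipeline would load the report file produced by its own Lighthouse run.

```python
import json
from typing import Tuple


def accessibility_score(report: dict) -> float:
    """Read the accessibility category score (0.0 to 1.0) from a report."""
    return report["categories"]["accessibility"]["score"]


def gate(report: dict, minimum: float = 1.0) -> Tuple[bool, str]:
    """Fail on automated regressions; a pass only unlocks the manual checks."""
    score = accessibility_score(report)
    if score < minimum:
        return False, (
            f"Automated accessibility score {score:.2f} is below "
            f"{minimum:.2f}; fix regressions before manual testing."
        )
    return True, (
        "Automated checks passed. Keyboard, screen reader, and voice "
        "control passes are still required before release."
    )


# Inline stand-in for a report file, trimmed to the one field used here.
sample_report = json.loads('{"categories": {"accessibility": {"score": 0.92}}}')
ok, message = gate(sample_report)
print(ok, message)
```

The design choice worth noting is the wording of the success message: even at a perfect score, the gate reports what remains to be tested instead of declaring the page accessible.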

If you want help putting that kind of testing strategy in place, 216digital can work alongside your team. Schedule an ADA Strategy Briefing with our experts to review your current workflow, understand your risk, and design an accessibility testing plan that pairs automated coverage with focused manual testing where it counts most.